Putting Amazon Textract to the test.

A 6 minute read, written by Mike Carter on 17 September 2019.

Amazon Textract extracts text and structured data from scanned or photographed documents, but how reliably can it be used for business process automation? We put it to the test.

We chose a small range of different document types for our tests; a payslip, an invoice, and a passport.

Each document represents a different mix of data structures, ensuring we're not just checking Optical Character Recognition (OCR), but seeing how well Textract can extract labelled values, and full tables of structured information.

In the case of the passport, we've also used a photo instead of a scan to see how well Textract handles lower quality documents.

The resultsPermalink

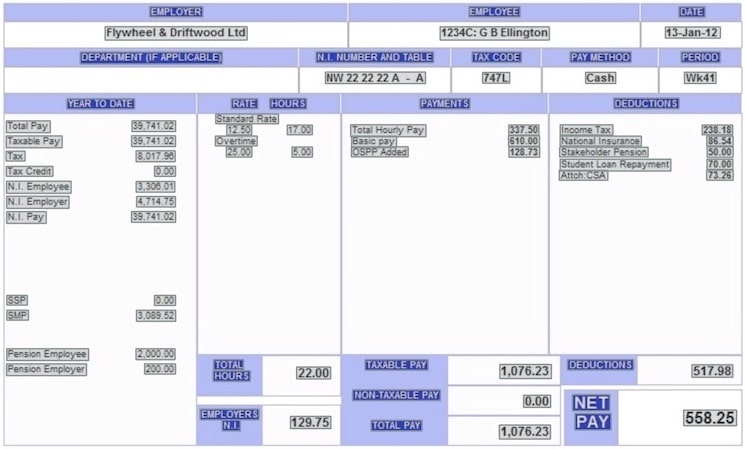

Payslips are heavily structured documents, usually formed by a series of nested tables, with labelled values scattered all over. They provide a good test of Textract's ability to extract structured tabular and labelled information.

For our test payslip, Textract managed to extract every piece of text correctly. What’s more, Textract was able to identify tables shown in the payslip, and correctly associate all labels with their values, e.g. "Total Pay" with "1,076.23". Textract makes all of this structured data available, giving us a range of convenient different ways to handle the extracted information depending on how we want to use it.

InvoicePermalink

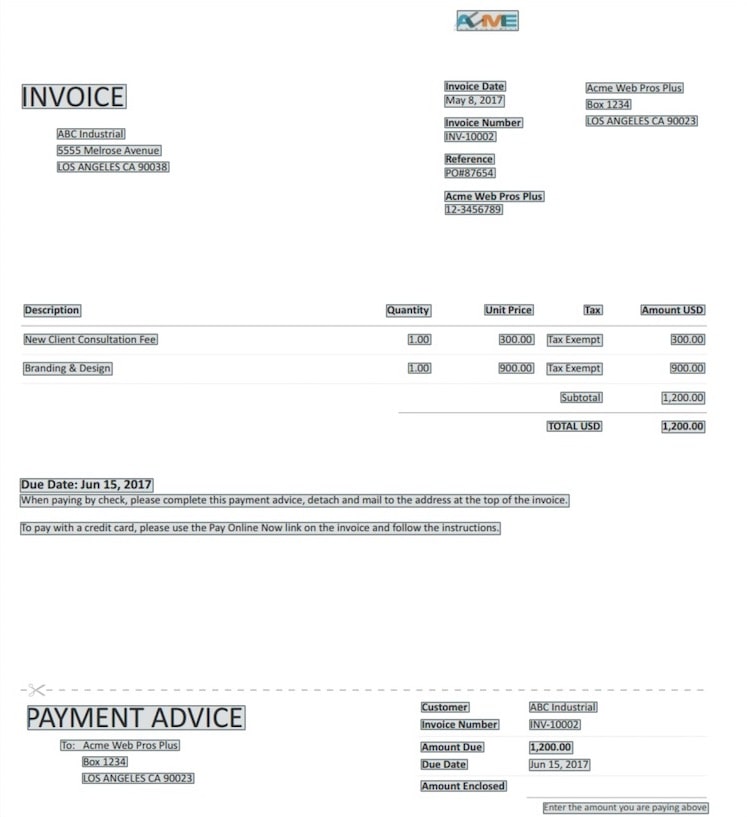

Invoices are typically a semi structured document. They feature a mix of free text with labelled values, and almost always have a table at the centre detailing pricing.

Again, for our test invoice, Textract was able to successfully extract all text correctly, and extract most of the labelled data correctly, including perfectly extracting the table of pricing at the centre.

Although the overall extraction was near perfect, a couple of pieces of text were incorrectly associated with labels. For example, in the bottom right of the invoice, "Amount Enclosed" was incorrectly associated with "Enter the amount you are paying above", when it should actually have been a label without a value.

PassportPermalink

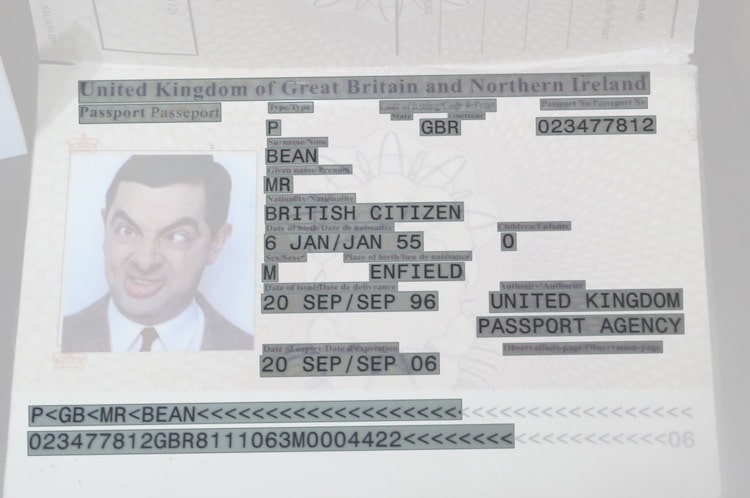

Passports are a very loosely structured document. They contain a lot of densely packed labelled information, with little alignment between values to form any obvious structure. In addition to this, the labels for text are tiny compared to their values. Combined with the fact we're using a photo of a passport rather than a higher quality scan, the passport is a more challenging task for Textract.

Despite the challenge, Textract again did a very good job of extracting information from the passport. Even where the text was small and unclear, it was able to successfully associate labels and values for the most part. It did, however, struggle to correctly parse some of the labels:

- "Date of birth" was incorrectly extracted as "Date or hinthe".

- "Sex" incorrectly was incorrectly extracted as "Sew".

- "Nationality" was incorrectly extracted as "Natioality".

Textract also struggled to correctly associate some labels with values. For example, "Date of birth" was not correctly matched to “6 JAN/JAN 55”.

Despite these failures, Textract coped better than expected with what was essentially a low quality photo of a poorly structured document.

TakeawaysPermalink

Data quality

Amazon Textract does a excellent job at extracting raw unstructured text from documents. Across all three of our tests, Textract was able to correctly extract the contents for all but the smallest, and blurriest of labels in the passport photo.

Where structured data is concerned, Textract usefully associates labels with their values. It was also able to correctly extract tables, even when the table's cells had no visible borders.

Textract appears to struggle a bit more when information is loosely structured, or where documents are provided at a low resolution. However, to combat this, Textract provides a confidence rating for each piece of information it extracts. In your business, you can use this confidence rating to set thresholds for your systems, ensuring only documents you're highly confident of are automatically processed.

A final note on quality - With continued investment from Amazon, Textract is only going to get better over time. Any busines using it will benefit from this for free. So, with a good quality integration, you should expect to increased rates of correctly processed documents over time with no additional effort from you.

Ease of integration

Amazon's documentation for Textract is extremely thorough, and they provide a wide variety of example code to work from to make it as accessible as possible to developers at different experience levels.

If you're an existing Amazon Web Services (AWS) customer, Textract can load documents directly from your Amazon S3 account for processing. Alternatively, your developers can send documents to be processed directly to Textract from any data source if you store your documents elsewhere.

Textract can be integrated into a wide variety of application types — consumer facing websites, business applications, scripted tasks, mobile apps, and web services can all benefit. Typical use cases for Textract include:

- Importing documents and forms into business applications.

- Making documents searchable.

- Building automated document processing workflows.

- Maintaining compliance in document archives.

- Extracting text for Natural Language Processing (NLP).

- Automated document classification.

Benefits to your businessPermalink

If your staff spend a lot of time on manual document processing work, Textract can be used to deliver huge savings, free up your staff to do more valuable work, ensure you're able to process workloads 24/7, and improve the overall efficiency of your business processes.

At Leaf, we specialise in using services like Textract to build digital products & services that automate business. If you want to understand more about how Textract could help you to increase efficiency, productivity, and reliability, don't hesitate to get in touch.